Overview

- React to discoveries: Scan new subdomains as they’re found

- Chain workflows: Connect reconnaissance → probing → scanning

- External integration: Receive webhooks from GitHub, CI/CD, or custom tools

- Real-time automation: Process findings as they occur

Event Architecture

Event Structure

Events carry structured data through the system.

Event Flow

Backpressure Handling

The event system protects against overload:

| Parameter | Value | Description |
|---|---|---|
| Queue Size | 1000 | Maximum events buffered |
| Timeout | 5 seconds | Wait time when queue is full |
| Behavior | Drop | Events dropped if queue remains full |
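The drop-on-full behavior in the table can be sketched as a bounded buffer. This is a minimal illustration rather than the engine's implementation: the `BoundedEventQueue` class and its method names are hypothetical, and the 5-second wait is omitted, so a full queue drops immediately.

```javascript
// Hypothetical bounded queue illustrating drop-on-full backpressure.
// Simplification: the documented system waits up to the timeout before
// dropping; this sketch drops as soon as the queue is full.
class BoundedEventQueue {
  constructor(capacity = 1000) {
    this.capacity = capacity;
    this.items = [];
    this.enqueued = 0; // events accepted into the buffer
    this.dropped = 0;  // events rejected because the buffer was full
  }

  // Returns true if the event was buffered, false if it was dropped.
  offer(event) {
    if (this.items.length >= this.capacity) {
      this.dropped += 1;
      return false;
    }
    this.items.push(event);
    this.enqueued += 1;
    return true;
  }

  // Removes and returns the oldest buffered event, or undefined if empty.
  poll() {
    return this.items.shift();
  }
}

// A capacity of 2 keeps the example small; the third offer is dropped.
const queue = new BoundedEventQueue(2);
const offerResults = ["a", "b", "c"].map((data) =>
  queue.offer({ topic: "assets.new", data })
);
console.log(offerResults, queue.enqueued, queue.dropped);
```

Tracking accepted and dropped counts alongside the buffer mirrors the kind of counters a producer would need to notice overload.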

Emitting Events

From Workflow Functions

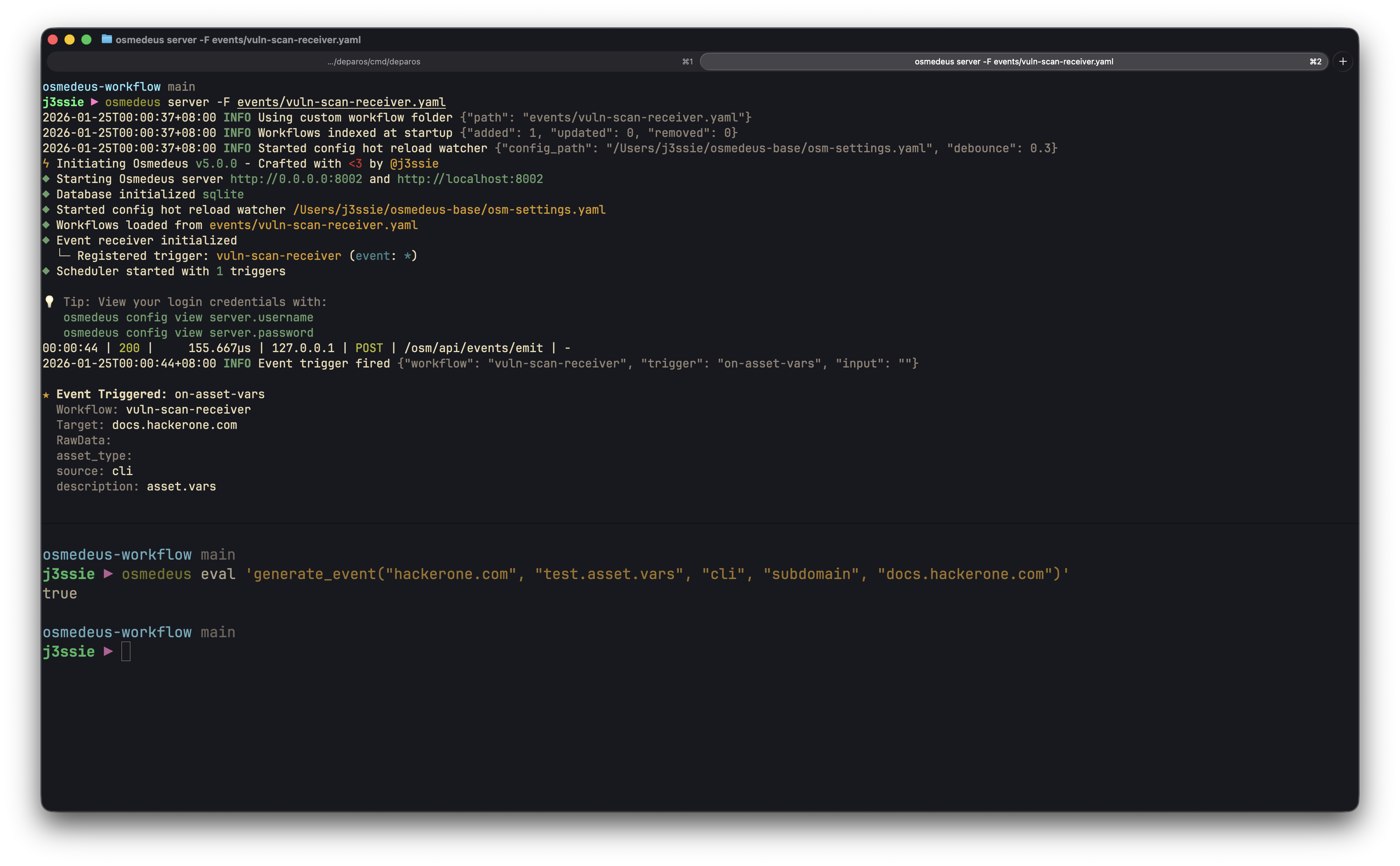

Use the `generate_event` function to emit events from workflows:

generate_event(workspace, topic, source, data_type, data)

Emit a single structured event.

- `workspace` - Workspace identifier for the event
- `topic` - Event topic/category (e.g., “assets.new”)
- `source` - Event source (e.g., “nuclei”, “httpx”)
- `data_type` - Type of data (e.g., “subdomain”, “url”, “finding”)
- `data` - Event payload (string or object)

Returns: boolean - true if the event was sent successfully
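A call might look like the following. Because `generate_event` exists only inside the workflow engine, this sketch defines a local stand-in with the same parameter order so the snippet runs on its own. The stand-in body, the workspace name, and the payload fields are illustrative assumptions.

```javascript
// Stand-in for the engine-provided generate_event (assumption: the real
// function is only available inside workflow function steps).
const emitted = [];
function generate_event(workspace, topic, source, data_type, data) {
  emitted.push({ workspace, topic, source, data_type, data });
  return true; // documented return value when the event is sent
}

// Emit one structured event with an object payload.
const sent = generate_event(
  "example-workspace", // hypothetical workspace identifier
  "assets.new",        // topic
  "httpx",             // source
  "url",               // data_type
  { url: "https://app.example.com", status_code: 200 } // hypothetical payload
);
console.log(sent, emitted[0].topic);
```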

generate_event_from_file(workspace, topic, source, data_type, path)

Emit an event for each non-empty line in a file.

- `workspace` - Workspace identifier for the events
- `topic` - Event topic/category
- `source` - Event source
- `data_type` - Type of data
- `path` - File path containing data (one item per line)

Returns: integer - count of events successfully generated
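The per-line behavior can be sketched as follows. To keep the example self-contained, file reading is replaced by an in-memory string; the `eventsFromLines` helper, the source name, and the choice to treat whitespace-only lines as empty are assumptions, not documented behavior.

```javascript
// Sketch of the per-line semantics: one event per non-empty line.
// Assumption: lines are trimmed, so whitespace-only lines are skipped.
function eventsFromLines(workspace, topic, source, dataType, text) {
  return text
    .split(/\r?\n/)
    .map((line) => line.trim())
    .filter((line) => line.length > 0)
    .map((line) => ({ workspace, topic, source, data_type: dataType, data: line }));
}

// Stands in for the contents of a results file with one blank line.
const fileContents = "a.example.com\n\nb.example.com\n";
const lineEvents = eventsFromLines(
  "example-workspace", // hypothetical workspace identifier
  "assets.new",
  "subfinder",         // hypothetical source name
  "subdomain",
  fileContents
);
console.log(lineEvents.length, lineEvents.map((e) => e.data));
```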

Webhook Functions

Send events to external webhook endpoints configured in settings.

notify_webhook(message)

Send a plain text message to all configured webhooks.

send_webhook_event(eventType, data)

Send a structured event to all configured webhooks.

From External Systems (Webhooks)

Receive webhooks via the API to trigger workflows.

Event Triggers

Trigger Configuration

Configure event triggers in workflow YAML.

EventConfig Fields

| Field | Type | Description |
|---|---|---|
| `topic` | string | Event topic to match (supports glob patterns `*`, `?`, `[...]`; empty matches all topics) |
| `filters` | []string | JavaScript expressions (all must pass) |
| `filter_functions` | []string | JavaScript expressions with utility functions available (all must pass) |
| `dedupe_key` | string | Template for deduplication key |
| `dedupe_window` | string | Time window for deduplication (e.g., “5m”) |

TriggerInput Options

Trigger input supports two syntaxes: a legacy syntax and a new exports-style syntax.

New Exports-Style Syntax (Recommended)

Map multiple event fields to workflow variables using a concise syntax:

- `event_data.<field>` - Access parsed event data fields (e.g., `event_data.url`, `event_data.severity`)
- `event.<field>` - Access event metadata (`event.topic`, `event.source`, `event.name`, `event.id`, `event.data_type`, `event.workspace`, `event.run_uuid`, `event.workflow_name`)
- `function(...)` - Transform values using utility functions (e.g., `trim(event_data.desc)`, `lower(event_data.name)`)
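To make the three expression forms concrete, here is a hedged sketch of how such expressions could be resolved against an event. The `resolveInput` function, the envelope shape, and the variable names in `inputMapping` are hypothetical; the engine's real parser and YAML syntax are not shown in this document.

```javascript
// Hypothetical utility functions available to input expressions.
const inputHelpers = {
  trim: (s) => String(s).trim(),
  lower: (s) => String(s).toLowerCase(),
};

// Resolve one expression: function(...), event_data.<field>, or event.<field>.
function resolveInput(expr, envelope) {
  const call = expr.match(/^(\w+)\((.*)\)$/);
  if (call) {
    const fn = inputHelpers[call[1]];
    if (!fn) throw new Error(`unknown function: ${call[1]}`);
    return fn(resolveInput(call[2].trim(), envelope)); // allows nesting
  }
  const [root, ...path] = expr.split(".");
  // event_data reads the parsed payload; event reads envelope metadata.
  const base =
    root === "event_data" ? envelope.data : root === "event" ? envelope : undefined;
  return path.reduce((value, key) => (value == null ? undefined : value[key]), base);
}

// Assumed envelope shape, for illustration only.
const inputEnvelope = {
  topic: "assets.new",
  source: "httpx",
  data: { url: "https://app.example.com", desc: "  Login Page  " },
};

// Workflow variable name -> expression (variable names are illustrative).
const inputMapping = {
  target: "event_data.url",
  origin: "event.source",
  description: "lower(trim(event_data.desc))",
};

const workflowInputs = Object.fromEntries(
  Object.entries(inputMapping).map(([name, expr]) => [name, resolveInput(expr, inputEnvelope)])
);
console.log(workflowInputs);
```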

Legacy Syntax

The original input configuration (still supported for backward compatibility):

| Type | Description | Fields |
|---|---|---|
| `event_data` | Extract from event payload | `field` - JSON path to extract |
| `function` | Transform with function | `function` - JS expression (e.g., jq) |
| `param` | Static parameter | `name` - parameter name |
| `file` | Read from file | `path` - file path |

Topic Glob Patterns

Event topics support glob patterns for flexible matching:

| Pattern | Description |
|---|---|
| `*` | Matches any sequence of characters |
| `?` | Matches any single character |
| `[abc]` | Matches any character in the set |
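The three pattern forms can be illustrated by translating a glob into a regular expression. This is a sketch of the matching rules in the table, not the engine's matcher; `globToRegExp` and `topicMatches` are hypothetical helpers, and edge cases such as a `]` inside a set are not handled.

```javascript
// Translate a glob pattern into an anchored regular expression.
function globToRegExp(pattern) {
  let source = "";
  for (let i = 0; i < pattern.length; i++) {
    const ch = pattern[i];
    if (ch === "*") {
      source += ".*"; // any sequence of characters
    } else if (ch === "?") {
      source += ".";  // any single character
    } else if (ch === "[") {
      const end = pattern.indexOf("]", i + 1);
      if (end === -1) {
        source += "\\["; // unterminated set: treat "[" literally
      } else {
        // Copy the set through, escaping backslashes inside it.
        source += "[" + pattern.slice(i + 1, end).replace(/\\/g, "\\\\") + "]";
        i = end;
      }
    } else {
      // Escape characters that are special in regular expressions.
      source += ch.replace(/[.+^${}()|\\\]]/g, "\\$&");
    }
  }
  return new RegExp("^" + source + "$");
}

// An empty pattern matches every topic, as described for the topic field.
function topicMatches(pattern, topic) {
  if (pattern === "") return true;
  return globToRegExp(pattern).test(topic);
}

console.log(topicMatches("assets.*", "assets.new"));
console.log(topicMatches("run.?tarted", "run.started"));
console.log(topicMatches("asset.[du]*", "asset.updated"));
```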

JavaScript Filters

Filters are JavaScript expressions evaluated against each event. All filters must return `true` for the event to trigger the workflow.

Available Event Fields

Filter Examples
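As a hedged illustration of the "all filters must return true" rule, the sketch below evaluates filter strings against a sample event. The `passesFilters` helper, the sample event shape, and the decision to treat a throwing filter as a non-match are assumptions. `new Function` executes arbitrary code, so this approach only suits trusted filter strings.

```javascript
// Evaluate each filter as a JavaScript expression with `event` in scope.
function passesFilters(filters, event) {
  return filters.every((expr) => {
    try {
      return new Function("event", `return (${expr});`)(event) === true;
    } catch (err) {
      return false; // assumption: a filter that throws does not match
    }
  });
}

// Assumed event shape, for illustration only.
const sampleEvent = {
  topic: "assets.new",
  source: "httpx",
  data: { url: "https://app.example.com/api/v1", status_code: 200 },
};

const sampleFilters = [
  "event.source === 'httpx'",
  "event.data.status_code >= 200 && event.data.status_code < 400",
];

const filterResult = passesFilters(sampleFilters, sampleEvent);
console.log(filterResult);
```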

Common Filter Patterns

Filter Functions

For more advanced filtering, use `filter_functions`, which provides access to utility functions like `contains()`, `starts_with()`, `ends_with()`, `file_exists()`, and more:

Available Filter Functions

| Function | Description | Example |
|---|---|---|
| `contains(str, substr)` | Check if string contains substring | `contains(event.data.url, '/api/')` |
| `starts_with(str, prefix)` | Check if string starts with prefix | `starts_with(event.data.severity, 'critical')` |
| `ends_with(str, suffix)` | Check if string ends with suffix | `ends_with(event.data.url, '.json')` |
| `file_exists(path)` | Check if file exists | `file_exists("{{event.data.output_path}}/results.json")` |
| `file_length(path)` | Get file line count | `file_length(event.data.results_file) > 0` |
| `is_empty(str)` | Check if string is empty | `!is_empty(event.data.target)` |
| `trim(str)` | Remove leading/trailing whitespace | Used in input expressions |
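The string helpers in the table can be approximated in plain JavaScript to test expressions outside the engine. The implementations below are stand-ins written for this sketch; the file helpers are omitted, and treating `null` or `undefined` as empty in `is_empty` is an assumption.

```javascript
// Stand-in implementations of the string utilities (not the engine's code).
const utilities = {
  contains: (str, substr) => String(str).includes(substr),
  starts_with: (str, prefix) => String(str).startsWith(prefix),
  ends_with: (str, suffix) => String(str).endsWith(suffix),
  is_empty: (str) => str == null || String(str).length === 0,
  trim: (str) => String(str).trim(),
};

// Evaluate expressions with `event` and every utility in scope; all must be true.
function passesFilterFunctions(expressions, event) {
  const names = Object.keys(utilities);
  const values = Object.values(utilities);
  return expressions.every(
    (expr) => new Function("event", ...names, `return (${expr});`)(event, ...values) === true
  );
}

// Assumed event shape, for illustration only.
const finding = {
  data: { url: "https://app.example.com/api/users.json", target: "app.example.com" },
};

const functionResult = passesFilterFunctions(
  [
    "contains(event.data.url, '/api/')",
    "ends_with(event.data.url, '.json')",
    "!is_empty(event.data.target)",
  ],
  finding
);
console.log(functionResult);
```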

Combining Filters and Filter Functions

You can use both `filters` (basic JS) and `filter_functions` (with utilities) together. All expressions from both must pass:

Event Envelope Template Variables

Event-triggered workflows have access to special template variables containing the full event data:

| Variable | Description |
|---|---|
| `{{EventEnvelope}}` | Full JSON-encoded event envelope |
| `{{EventTopic}}` | Event topic (e.g., “assets.new”) |
| `{{EventSource}}` | Event source (e.g., “nuclei”, “httpx”) |
| `{{EventDataType}}` | Data type (e.g., “subdomain”, “url”) |
| `{{EventTimestamp}}` | Event timestamp (RFC3339 format) |
| `{{EventData}}` | Raw event data payload |
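The sketch below shows one way these variables could be derived from an event and substituted into a step command. The envelope field names (`topic`, `source`, `data_type`, `timestamp`, `data`), the `envelopeVariables` and `renderTemplate` helpers, and the sample command are assumptions for illustration; the engine's own template renderer may behave differently.

```javascript
// Build the template variables from an assumed envelope shape.
function envelopeVariables(envelope) {
  return {
    EventEnvelope: JSON.stringify(envelope),
    EventTopic: envelope.topic,
    EventSource: envelope.source,
    EventDataType: envelope.data_type,
    EventTimestamp: envelope.timestamp,
    EventData:
      typeof envelope.data === "string" ? envelope.data : JSON.stringify(envelope.data),
  };
}

// Replace {{Name}} with its value; unknown names are left untouched.
function renderTemplate(template, vars) {
  return template.replace(/\{\{(\w+)\}\}/g, (match, name) =>
    Object.prototype.hasOwnProperty.call(vars, name) ? vars[name] : match
  );
}

const sampleEnvelope = {
  topic: "assets.new",
  source: "httpx",
  data_type: "url",
  timestamp: "2024-01-01T00:00:00Z", // RFC3339
  data: "https://app.example.com",
};

const rendered = renderTemplate(
  "echo {{EventData}} from {{EventSource}} on {{EventTopic}}",
  envelopeVariables(sampleEnvelope)
);
console.log(rendered);
```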

Deduplication

Prevent duplicate workflow triggers using time-windowed deduplication. Example `dedupe_key` templates:

- `{{event.source}}-{{event.data.url}}` - Composite key
- `{{event.data.hash}}` - Simple field
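Time-windowed deduplication can be sketched as a map from the rendered key to the time it was last accepted. The `Deduplicator` class is hypothetical, and it assumes that a suppressed duplicate does not extend the window; the engine may use different semantics.

```javascript
// Hypothetical time-windowed deduplicator keyed by a rendered template.
class Deduplicator {
  constructor(keyTemplate, windowMs) {
    this.keyTemplate = keyTemplate;
    this.windowMs = windowMs;
    this.lastSeen = new Map(); // key -> time (ms) the key was last accepted
  }

  // Expand {{event.a.b}} placeholders against the event object.
  keyFor(event) {
    return this.keyTemplate.replace(/\{\{\s*event\.([\w.]+)\s*\}\}/g, (_, path) => {
      const value = path
        .split(".")
        .reduce((v, k) => (v == null ? undefined : v[k]), event);
      return value == null ? "" : String(value);
    });
  }

  // Returns true if the event should trigger, false if it is a duplicate.
  shouldTrigger(event, nowMs) {
    const key = this.keyFor(event);
    const previous = this.lastSeen.get(key);
    if (previous !== undefined && nowMs - previous < this.windowMs) return false;
    this.lastSeen.set(key, nowMs);
    return true;
  }
}

// A 5-minute window, matching the "5m" example for dedupe_window.
const dedupe = new Deduplicator("{{event.source}}-{{event.data.url}}", 5 * 60 * 1000);
const probeEvent = { source: "httpx", data: { url: "https://app.example.com" } };

const first = dedupe.shouldTrigger(probeEvent, 0);              // first sighting
const second = dedupe.shouldTrigger(probeEvent, 60 * 1000);     // 1 minute later
const third = dedupe.shouldTrigger(probeEvent, 6 * 60 * 1000);  // 6 minutes after the first
console.log(dedupe.keyFor(probeEvent), first, second, third);
```

Passing the current time as an argument keeps the sketch deterministic and easy to test.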

Event Topics Reference

Built-in Topics

| Topic | Description | Typical Source |
|---|---|---|
| `run.started` | Workflow run began | Engine |
| `run.completed` | Workflow run finished | Engine |
| `run.failed` | Workflow run failed | Engine |
| `step.completed` | Individual step finished | Engine |
| `step.failed` | Individual step failed | Engine |
| `asset.discovered` | New asset discovered | Recon tools |
| `asset.updated` | Asset information updated | Scanners |
| `webhook.received` | External webhook received | API |
| `schedule.triggered` | Scheduled workflow triggered | Scheduler |

Custom Topics

Define your own topics for domain-specific events.

Building Event Pipelines

Chaining Workflows

Create pipelines where each workflow triggers the next.

Stage 1: Subdomain Enumeration

Stage 2: HTTP Probing (Triggered by Stage 1)

Stage 3: Vulnerability Scanning (Triggered by Stage 2)
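The three-stage chain can be modeled with a tiny in-memory event bus, which shows how each stage's output event becomes the next stage's trigger. Everything here is a hypothetical model: the `on` and `emit` helpers, the `assets.live` topic, and the logged strings stand in for real workflows and tools.

```javascript
// Minimal in-memory event bus: exact topic match, synchronous delivery.
const pipelineHandlers = [];
const pipelineLog = [];

function on(topic, handler) {
  pipelineHandlers.push({ topic, handler });
}

function emit(topic, data) {
  for (const entry of pipelineHandlers) {
    if (entry.topic === topic) entry.handler(data);
  }
}

// Stage 2: probe each discovered subdomain, then announce the live host.
on("assets.subdomain", (host) => {
  pipelineLog.push(`probe ${host}`);
  emit("assets.live", `https://${host}`); // hypothetical topic name
});

// Stage 3: scan each live host announced by stage 2.
on("assets.live", (url) => {
  pipelineLog.push(`scan ${url}`);
});

// Stage 1: enumeration emits one event per discovered subdomain.
for (const host of ["a.example.com", "b.example.com"]) {
  emit("assets.subdomain", host);
}

console.log(pipelineLog);
```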

Error Handling Pattern

Handle failures gracefully with error events.

Real-World Examples

Asset Discovery Pipeline

A complete pipeline from subdomain discovery to live host probing.

Vulnerability Notification

Automatically notify on critical findings.

External Integration: GitHub Webhooks

Trigger scans on repository pushes.

Monitoring Events

Event Logs API

Query event history via the REST API.

CLI Event Logs

Query event history using the database CLI.

Queue Metrics

The scheduler tracks event processing metrics:

| Metric | Description |
|---|---|
| `events_enqueued` | Total events successfully queued |
| `events_dropped` | Events dropped due to full queue |
| `queue_current_size` | Current events waiting |

Event Receiver Status

Check the status of event-triggered workflows.

Bulk Event Processing

Process multiple targets discovered via events using the `func eval` bulk processing capabilities.

Processing Targets from File

Using Function Files

Store reusable processing logic in files.

Function Call Syntax

Functions can be invoked in multiple ways.

Integration with Event Pipeline

Combine event log queries with bulk function evaluation.

Testing Event Functions

Test event generation before deploying workflows.

Best Practices

- Use specific topics - Prefer `assets.subdomain` over generic `assets.new` for precise filtering
- Filter early - Apply filters to reduce processing overhead and prevent unnecessary workflow triggers
- Handle backpressure gracefully - Design workflows to tolerate dropped events during high load
- Log event errors - Use `on_error` handlers to track and emit failure events for debugging
- Test triggers disabled first - Set `enabled: false` initially, validate filters, then enable
- Use idempotent handlers - Workflows may receive duplicate events; design steps to handle this
- Batch file emissions - Use `generate_event_from_file` for bulk discoveries instead of individual events
- Use deduplication - Configure `dedupe_key` and `dedupe_window` to prevent duplicate processing
- Include workspace - Always pass the workspace parameter to maintain proper event isolation

Troubleshooting

Events Not Triggering

- Check topic match: Event topic must match the trigger's configured topic or glob pattern
- Verify trigger is enabled: `enabled: true` in workflow YAML
- Test filter expressions: Simplify filters to isolate issues
- Check scheduler is running: Events only process when server is active
- Check event receiver status

Events Dropped

- Check queue metrics: High `events_dropped` indicates overload
- Reduce event volume: Batch discoveries, filter at source
- Increase queue size: Configure via scheduler settings if needed
- Scale with workers: Distribute processing across workers

Filter Not Matching

- Verify event data structure: Check actual event payload
- Test JS expression: Filters use JavaScript syntax
- Check data types: String vs number comparisons
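The string-versus-number pitfall is standard JavaScript behavior and is easy to reproduce. In the sketch below, the payload fields `port` and `count` are hypothetical; the point is that values arriving as strings need converting before numeric comparison.

```javascript
// Hypothetical payload whose numeric-looking values arrived as strings.
const payload = { port: "8080", count: "10" };

// Strict equality fails because the types differ (string vs number).
const strictMatch = payload.port === 8080;

// Converting first makes the comparison numeric.
const convertedMatch = Number(payload.port) === 8080;

// Two strings compare lexicographically, so "10" sorts before "9".
const stringCompare = payload.count > "9";

// Converting first gives the intended numeric comparison.
const numericCompare = Number(payload.count) > 9;

console.log(strictMatch, convertedMatch, stringCompare, numericCompare);
```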